From IC Insider CAE USA Inc.

By Colonel Terry Guild, United States Army, Retired. Director, Intelligence and Geospatial Business Development, CAE USA Inc.

Probability vs. Augmented Reality ~ Intelligence Analysis in a User‑Defined Digital Ecosystem

Rapid advancements in 3D modeling and simulation (M&S), digital technologies supporting immersive synthetic environment development, augmented reality (AR), and game engines could be a catalyst for advancing intelligence analytic tradecraft.

These developments, applied with a robust methodological framework, could enable intelligence analysts to visualize changes in a user-defined digital ecosystem. This ecosystem could reduce the “fog” of information and help intelligence professionals develop and refine the most accurate assessments and threat pictures.

Intelligence professionals constantly deal with ambiguity, uncertainty, and the reliability of information. Translating these uncertainties to an AR environment is even more complex; no single solution or technology will fully move analytic tradecraft into an immersive environment.

The “Fog” of Information

Intelligence professionals are bombarded with information from multiple sources across multiple domains. These data sources continue to rapidly expand because of increased sensor development, technical advancements, and increased globalization of information, among other factors.

Analysts at all levels are challenged by this data overload. Their challenge to triage, ingest, assess, and make sound judgments on information is daunting and complex, which increases the probability of missing either critical information or a connection/linkage that could change the outcome of threat understanding and awareness.

Data overload also significantly increases the fog of information, making assessments, judgments, and findings more complex and questionable.

All-Source Intelligence Fusion

In response to data overload, the Intelligence Community and military services have focused on developing all‑source intelligence fusion tools, including artificial intelligence and machine learning (AI/ML) capabilities, which help analysts search, triage, visualize, and link disparate structured and unstructured data in a geospatially referenced context.

These all-source intelligence fusion tools are intended to help the analyst transform the fog of information into well-informed intelligence assessments. These assessments focus on predicting events, accurately identifying adversary intentions, and bringing the operational environment into focus for commanders and staff.

Digital Ecosystem

CAE USA’s Digital Ecosystem

In Joint Publication (JP) 2-0, Joint Intelligence, the author succinctly outlines the nature of intelligence by stating, “The management and integration of intelligence into military operations are inherent responsibilities of command. Information is of greatest value when it contributes to the commander’s decision-making process by providing reasoned insight into future conditions or situations.”

What if intelligence analysts had the technology to create user-defined digital ecosystems with capabilities to use vast and multiple all-source information based on structured and unstructured data?

This user-defined digital ecosystem could effectively translate the written word (structured and unstructured) into a physical, augmented environment that enables the analyst to play out threat scenarios, use disparate information to show linkages in a geospatial environment, show commonalities and differences, paint a picture for advanced assessments and analysis, and, as JP 2-0 outlines, provide reasoned insight into future conditions or situations.

As a young all-source analyst at the Joint Staff J2, I tried to envision scenarios based on an overwhelming amount of data I physically sorted through, trying to answer the what-ifs, when, why, how, and impacts of events based on disparate indicators and information that most of the time did not seem linked.

Currently, intelligence professionals at all echelons do not have the capability to exploit advances in 3D, synthetic environments, and game engines to predict and visualize threat information. The future lies in enabling analysts to better understand the massive amounts of all‑source data that are collected and processed 24 hours a day, 365 days a year.

Recently, the United States Geospatial Intelligence Foundation (USGIF) Modeling, Simulation and Gaming Working Group published a technical paper titled “Advancing the Interoperability of Geospatial Intelligence Tradecraft with 3D Modeling, Simulation, and Game Engines.”

The objective of the paper was “focused on identifying technology trends that are influencing the convergence of GEOINT and M&S tradecraft.”

The paper also called out several compelling research topics, including new approaches and methods of integrating 3D/4D analytics within mission planning and mission rehearsal training environments, “geo-registration of 3D point cloud features into 3D scenes to enhance contextual analysis and situational understanding,” “AI/ML approaches for contextual-based tipping and cueing of threats,” and more.

While not specified, all the research topics outlined would support the advancement of intelligence analysis, planning, operations, and the development of new tradecraft capabilities across the Intelligence Community—from strategic intelligence to the most tactical application of intelligence analysis in support of the United States Government.

CAE USA Inc. (CAE) is expanding the thesis of GEOINT and M&S tradecraft convergence by applying these concepts for intelligence analysis, operations, planning, and the development of a Common Intelligence Picture (CIP).

The Future of Intelligence Analysis

The Intelligence Community does not appear to be fully exploring, or thinking creatively about, how to use rapid technological advancements to improve intelligence analysis.

Colonel Brendan Cook, Royal Canadian Air Force, touched on this topic in his 2019 Air War College research/proposal titled “Augmented Reality, Collaborative and Analytic Tools for ISR Operations.”

The paper’s premise is that the growth of ISR operations has led to a massive increase in collected data. However, converting the data into understanding or increased situational awareness is not being achieved.

Colonel Cook proposes a methodological solution and charts a path for the development of AR tools for collaboration and analysis in ISR operations. Surprisingly, research about advancing intelligence analysis with a digital ecosystem is extremely limited.

Commanders are faced with data overload and lack an integrated CIP to visualize the threat and battlespace. Currently, there is not a technical solution to share a visualized common threat picture across echelons of command.

Similarly, commanders do not have the capability to visualize a common operating picture (COP); integrating a COP and CIP is not on the horizon even though the technology is available. However, CAE is currently working toward a solution.

Our approach is additive to current efforts, building a user-defined digital ecosystem for advanced intelligence analysis, increasing our predictive space while adding to a COP and CIP.

United States Special Operations Command (USSOCOM) Mission Command

As an example, CAE is supporting USSOCOM with the design and architectural development of their Mission Command System and, more specifically, their COP.

CAE is leading the overall design and integrating best-of-breed technologies with a modular, open architecture design, enabling deliberate and quick integration of the applications required to support USSOCOM’s mission command requirements.

This unique, open architecture creates, shares, and manages data for mission command on a “single pane of glass” by offering a disruptive approach that enables third-party analytics.

While mission command is operations-focused, all too often the intelligence domain is overlooked. Tactical and operational Special Operations Forces (SOF) intelligence analysts have at their disposal a myriad of intelligence capabilities and resources for inclusion in their intelligence research and development process.

The capabilities span organic and attached resources, as well as tactical, operational, and strategic collection and analytic capabilities. Analysts must understand these capabilities in time and space and be able to see them in a geospatially referenced picture.

Creating a user-defined digital ecosystem from multiple-source information would focus the analyst by visualizing structured and unstructured data while playing out scenarios from M&S and gaming capabilities.

Joint All-Domain Command and Control (JADC2)

JADC2 is the initiative to replace the current command and control systems with one that connects the existing sensors and shooters and distributes the available data to all domains (sea, air, land, cyber, and space) and forces that are part of the U.S. military.

According to a 04 June 2021 Congressional Research Service article, “Joint All-Domain Command and Control (JADC2),” “JADC2 envisions providing a cloud-like environment for the Joint Force to share intelligence, surveillance, and reconnaissance data, transmitting across many communications networks, to enable faster decision-making.”

Across the Joint Force, the intelligence and operational architectures are separate and, in many cases, not fully integrated to give commanders the best operational and intelligence “single pane of glass” picture to support mission command.

While separate architectures are understandable from a security and information assurance perspective, the ability to integrate the most relevant data into a single digital ecosystem is critical for commanders at all echelons to have the most current information to help reduce risk to mission and risk to force.

The missing component in the JADC2 article is the ability to visualize that fully integrated data on a single “pane of glass.”

Conclusion & CAE Vision

Rapid advancements in computing power and other technologies will not, by themselves, solve or advance intelligence analytic tradecraft. They do set the stage for full immersion in, and adoption of, a digital ecosystem that provides intelligence analysts with advanced tools for reducing the ambiguity and uncertainty of disparate data and for assessing its reliability.

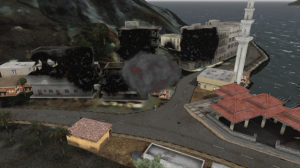

CAE is aggressively exploiting rapid technological advancements in 3D, synthetic environments, and game engines to enable advanced intelligence analysis through a user-defined (tailorable) digital ecosystem that gives analysts advanced visualization and decision-support tools for exploring threat data and scenarios.

CAE’s vision is to address the “fog” of information by leveraging our advanced 3D, M&S, visualization, and AR capabilities to offer analysts a digital environment in which to achieve data superiority.

The framework utilizes our advancements and capabilities to model enemy behavior by applying high-performance computing, AI reasoning engines, and probability theory to expose patterns of life and highlight anomalies to advance analytic tradecraft.
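As a purely illustrative sketch, and not a description of CAE's framework, the idea of using probability theory to expose patterns of life and highlight anomalies can be shown with a simple frequency baseline. Everything here is an assumption made for illustration: the site names, the (location, hour) event format, the add-one smoothing, and the 5-bit threshold are all hypothetical. The code estimates how probable each new observation is given past activity and flags the ones that are highly surprising.

```python
import math
from collections import Counter


def build_baseline(observations):
    """Count how often each (location, hour) pair occurs in past observations."""
    return Counter(observations)


def surprisal(baseline, event, n_possible):
    """Return -log2 P(event), estimated with add-one (Laplace) smoothing.

    n_possible is the assumed number of distinct (location, hour) pairs,
    so that never-seen events still receive a small nonzero probability.
    """
    total = sum(baseline.values())
    probability = (baseline[event] + 1) / (total + n_possible)
    return -math.log2(probability)


def flag_anomalies(baseline, new_events, n_possible, threshold_bits):
    """Return the new events whose surprisal exceeds the threshold."""
    return [
        event
        for event in new_events
        if surprisal(baseline, event, n_possible) > threshold_bits
    ]


# Hypothetical pattern-of-life history: (location, hour of day) sightings.
history = (
    [("depot", 8)] * 40
    + [("market", 12)] * 35
    + [("depot", 18)] * 25
)
baseline = build_baseline(history)

# Assumed event space: 3 sites x 24 hours.
N_POSSIBLE = 3 * 24

new_events = [("depot", 8), ("bridge", 3)]
flagged = flag_anomalies(baseline, new_events, N_POSSIBLE, threshold_bits=5.0)
print(flagged)
```

In this toy data, the routine morning sighting at the depot stays below the threshold, while the never-before-seen night sighting at the bridge is flagged. An operational system would need far richer models, but the principle of scoring new observations against a learned baseline is the same.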

About CAE

CAE Inc. is a high technology company and leading provider of training, simulation, and operational support across multi-domain operations. The company leverages its M&S expertise to create open, integrated, and digitally immersive synthetic environments designed to support planning, analysis, training, and operational decision-making. CAE USA is the largest segment within CAE Inc.’s Defense & Security business unit with specific responsibility for serving the United States, South America, and select international markets.

About IC Insiders

IC Insiders is a special sponsored feature that provides deep-dive analysis, interviews with IC leaders, perspective from industry experts, and more. Learn how your company can become an IC Insider.