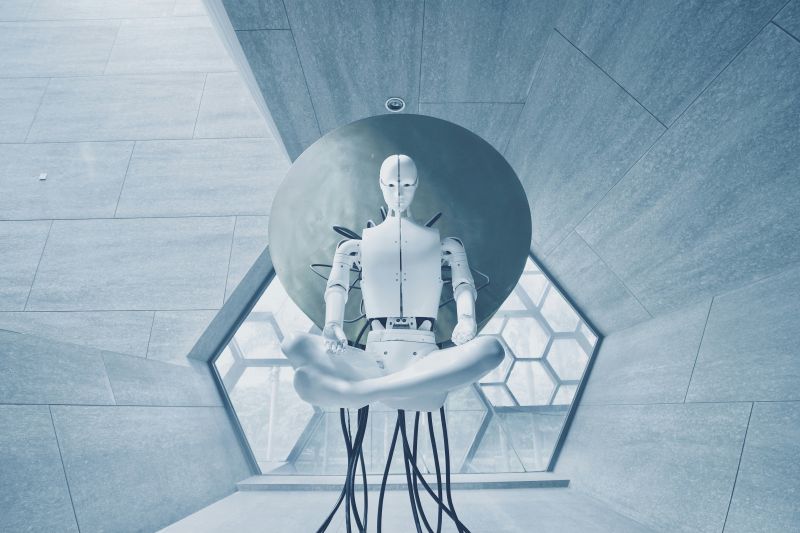

On June 28, the Defense Advanced Research Projects Agency (DARPA) announced the Shared-Experience Lifelong Learning (ShELL) program. Responses are due by 4:00 p.m. Eastern on July 27.

DARPA is issuing an Artificial Intelligence Exploration (AIE) Opportunity inviting submissions of innovative basic or applied research concepts in the technical domain of lifelong learning by agents that share their experiences with each other. This AIE Opportunity is issued under the Program Announcement for AIE, DARPA-PA-20-02. All awards will be made in the form of an Other Transaction (OT) for a prototype project. The total award value for the combined Phase 1 base and Phase 2 option is limited to $1,000,000 per proposal. This total includes Government funding and performer cost share, if required or if proposed.

Lifelong Learning (LL) is a relatively new area of machine learning (ML) research, in which agents continually learn as they encounter varying conditions and tasks while deployed in the field, acquiring experience and knowledge and improving performance on both novel and previous tasks.¹ This differs from the train-then-deploy process for typical ML systems, which often results in:

- Unpredictable outcomes when the system encounters input conditions not represented in its training experience

- Catastrophic forgetting of previously learned knowledge that would be useful for new instances of earlier tasks

- The inability to execute new tasks effectively, if at all

Current LL research assumes a single, independent agent that learns from its own actions and surroundings; it has not considered populations of LL agents that benefit from each other's experiences. Furthermore, the algorithms used for LL typically require large amounts of computing resources, including server farms, GPUs, and other resource-intensive hardware, and generally do not have to contend with limited communication resources.

The Shared-Experience Lifelong Learning (ShELL) program extends current LL approaches to large numbers of initially identical agents. Once deployed, these agents may encounter different inputs and environmental conditions, execute variants of a task, and therefore learn different lessons. Other agents in the population could benefit if what one agent learns were shared with the rest. Such sharing of experiences could significantly reduce the amount of training any individual agent requires. Sharing might follow policies based on task, environmental, or contextual similarity or difference, physical proximity, timing, or other factors.

Benefits would be demonstrated by comparing ShELL agents against baseline LL agents on metrics such as forward and backward transfer, task recovery, and cumulative performance improvement as a function of task similarity or difference, difficulty, and complexity. In ShELL:

- A population of agents in some initial condition is deployed and undergoes continuous and potentially asynchronous LL from the distinct inputs and operating conditions that each agent encounters

- These agents share what they have learned with other agents, thereby providing the agents useful knowledge covering a variety of situations

- The sharing topology, timing, and algorithms reflect hardware resource constraints and communication costs

ShELL delivers "lifelong learning at the edge" capabilities, in which LL agents operate on size-, weight-, and power-constrained (SWaP) and compute-constrained platforms with limited communications, continually learning and sharing useful knowledge and experience as they are deployed and execute their tasks.

Read the full ShELL opportunity description on SAM.

Source: SAM